Apparently Gross Government Overreach Stops at Child Trafficking

It's horrifying—but important—information to have.

For years, Americans have been told that at least two things are as inevitable as death and taxes:

Government and tech companies can track and trace every computer click we make.

Government and tech companies are entirely powerless to stop crimes against children—many of which are planned, committed, or shared online.

Sort of seems incongruous, doesn’t it? We have the most monitored communications system in human history—and yet the one thing we absolutely cannot locate is a pedophile with a Wi-Fi connection.

It’s… weirdly convenient.

During a Senate hearing this week titled Lost and Exploited: Confronting Child Trafficking and the Failure to Protect America’s Most Vulnerable, football legend Tim Tebow—founder of the Tim Tebow Foundation, which works to combat child trafficking and sexual exploitation, God bless him—shared some jaw-dropping stats with hearing chair Josh Hawley that should make every human being on the planet stop mid-scroll.

In 2023 alone, 104,370,572 images and videos of suspected child abuse were reported in the United States. One hundred and four million. That’s not a statistic—it’s evidence. But numbers that massive can almost stop sounding real—which, unfortunately, is exactly what makes them easy to ignore.

So, they know this material exists… but they can’t take it down? My Instagram post sharing the CDC’s own vaccine-injury data from 2023—the one that got my entire account nuked within hours—is deeply offended.

Tebow next displayed a map of the U.S. showing 338,000 unique IP addresses of individuals who are downloading, sharing, or distributing child sexual abuse images—almost all featuring victims under the age of twelve—in one single six-month period.

“We know that 55 to 85 percent of [these users] are hands-on offenders,” Tebow continued, “and we also know that the average offender has 13 victims in their lifetime.” And yet—somehow—the system that can flag and remove your tweet for using the wrong pronoun or questioning vaccine gospel by lunchtime is powerless to shut this down?

Forgive me if I’m ~~calling bullshit~~ a tiny bit skeptical.

We are constantly told (threatened?) that the internet is heavily monitored. We learned from Edward Snowden and Julian Assange that intelligence agencies collect staggering amounts of digital data on citizens. Governments claim they can track terror networks, disrupt cyberattacks, and detect threats using sophisticated monitoring tools. Tech companies routinely assure Congress they are investing billions into AI moderation. Your email provider can scan attachments for viruses in milliseconds. Your phone can identify your face in the dark while you’re half asleep. But tens of millions of images of child abuse?

“Yeah, we got nothing.”

Really? If the government truly has the surveillance capabilities it claims, you would think there might be some automatic tripwire for this stuff. A trigger phrase. A digital alarm bell. Type certain words into a search bar and—boom—an agency somewhere at least takes a look. (Many U.S. schools already use monitoring software that flags searches for phrases like “how to build a bomb” or “school shooting plan,” sometimes alerting administrators—and even police—within hours.)

That, of course, raises the quintessential objection: wouldn’t that violate our rights? In the United States, the First Amendment protects speech, and the Fourth Amendment protects citizens from unreasonable searches. You generally can’t be investigated simply for plugging something into Google. And to a large extent that’s a good thing, because a government that monitors every thought experiment, research attempt, or morbid curiosity would be terrifying.

But should pedophiles—the most vile of criminals—get the benefit of the same protections designed to safeguard ordinary citizens? And what about the sites hosting and distributing this material? How are they allowed to remain operational?

No one wants a world where typing “how does cyanide work?” triggers a knock on the door from Homeland Security. That would be dystopian. But if someone Googles some version of that thirty-seven times—along with “how to dispose of a corpse” and “tips for embalming a body at home”—it doesn’t seem unreasonable that the system might raise a flag.

To be fair, the problem isn’t purely technical negligence. Some of this material spreads through encrypted services where companies claim they cannot see what users are sharing. But surely there’s a middle ground between complete digital prison and 104 million images of child abuse freely floating around the internet.

Alas, here’s the paradox: The same system that insists it cannot proactively monitor criminal activity—ostensibly out of respect for constitutional ethics—somehow manages to throttle controversial political topics, shadow-ban accounts, and remove inconvenient posts at lightning speed.

At the same Senate hearing, the mother of a child trafficking survivor (whose ordeal is too horrifying to share or, frankly, even imagine) testified that she has spent years begging tech companies to remove unthinkable images of her daughter that continue to circulate online. Her conclusion? The companies don’t do anything because stopping it would really eat into their profit margins.

Naturally—because America—there’s a law in place (Section 230 of the Communications Decency Act) that basically says online platforms aren’t legally liable for content posted by their users. It’s one of the reasons social media companies have grown into trillion-dollar ecosystems. The same law also allows platforms to remove content they consider objectionable (ivermectin, anyone?). In other words, they get to enjoy the legal protections of being a neutral platform while exercising the editorial power of a publisher.

Critics argue the protection has removed incentives to aggressively police the worst content. Supporters say removing it would mean every single post would have to be pre-screened to avoid liability, which would essentially break the internet.

I’m starting to think that might be a good thing.

Because at this point, the problem clearly isn’t capability. We know what these systems can do. We see it every day. Algorithms can detect a copyrighted song in the background of a video in seconds. Platforms can remove a post for using a trigger word before it’s gotten a single view. Entire accounts can disappear overnight for stepping outside approved narratives.

So the idea that tens of millions of images of child abuse are simply too difficult to locate, remove, or trace strains credulity—and trust me, I honestly cannot find a nicer or more benign way to say that.

The truth is harder to swallow. It’s not that they can’t find it. It’s that finding—and fixing—it would cost too much, reveal too much, and require them to take responsibility for too much. They’ve shown us, over and over again, that when they really want to enforce something online, they absolutely can. And when they don’t… they don’t.

So what does the average person do with information like this? I wish I knew. My best guess is this: stop accepting the explanation that nothing more can be done. The people running the most powerful information systems ever built would very much like us to believe that this problem is simply too big, too complicated, too technical, too expensive to solve. Maybe it is. But before we accept that, they should probably have to prove it.

And so far, they haven’t.

Am I right? I know you’ll let me know in the comments.

P.S. If you missed this weekend’s Subscriber Spotlight—and you’ve ever even briefly entertained the idea of considering maybe someday hypothetically writing a book—I encourage you to check it out! In the meantime, I hope everyone is dealing with forced sleep deprivation as well as can be expected.

Of course they can, and they've had the ability to do so for a very, very long time. Why don't they? Because they are part of the child trafficking. That's why the Epstein case never was, and clearly never will be, fully investigated.

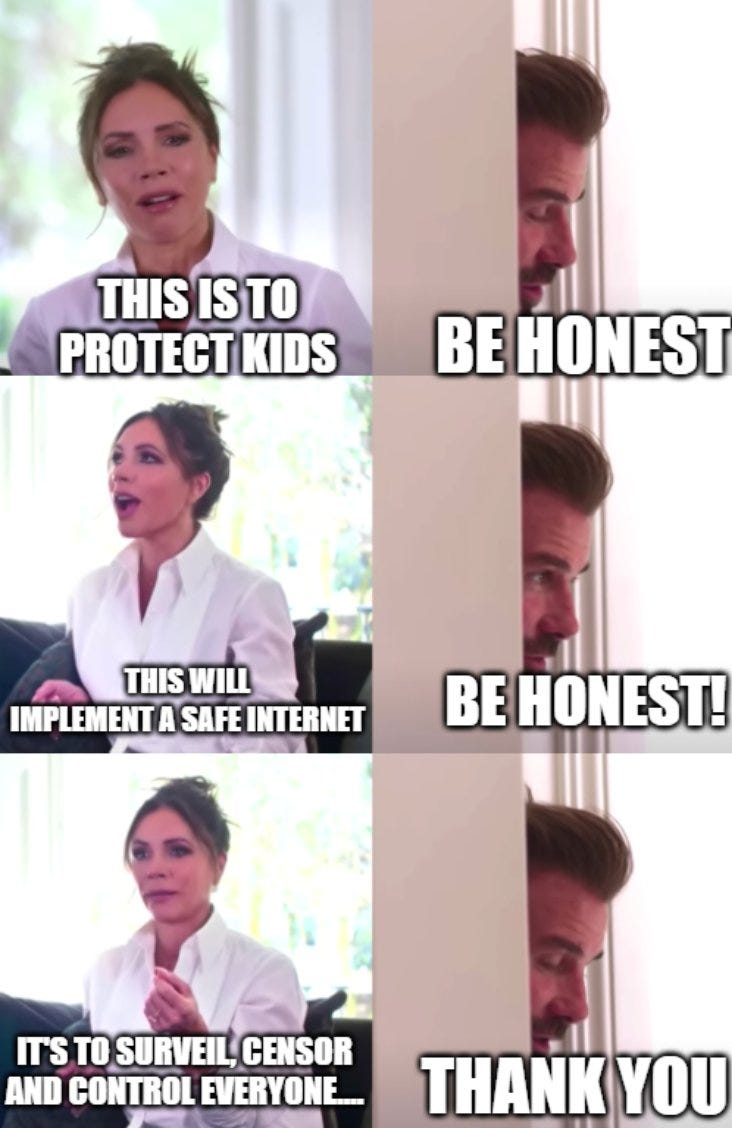

But now "they" might care about child trafficking, because they want to legally track and control every little thing that we do and say. So if you care that much about child trafficking, then you will give up more of your rights.

The guise of protecting children will always be used to take away more of our rights. The idea that algorithms and AI can't be used to stop child trafficking and pedophiles is absurd. It's almost as absurd as believing Epstein killed himself and that the COVID shots are safe and effective.

IMO, these are the most heinous and taboo crimes known to mankind. Powerful forces are covering them up to protect themselves. The Epstein Files provide the evidence. I will contribute to Tim Tebow’s foundation.